Testing different variations is one of the quickest ways to learn, and digital advertising is no exception. Yet when the conversation turns to A/B testing, many of us get nervous, and all sorts of possible catastrophes begin swimming around in our heads.

But the truth is, advertising is a quickly evolving tactic, and in order to gain momentum, testing has become a necessity. Whether your advertising is social, display, native or search, the key to better results and return on investment is testing.

To help you navigate the sometimes-confusing world of digital advertising testing, we’ve provided some helpful resources below.

What is A/B Testing?

An A/B Test is simply a test between two variants, a control and a variation, or as Google likes to call it, an experiment. Examples might include creating two different:

- Landing Pages

- Assets

- Ads

How to Approach an A/B Split

An A/B split needs to be shown to the exact same audience, during the same times of day, same days of the week and in the same areas in order for you to have a firm grasp on which variation is truly performing better.

It’s important to understand your data set or audience so that you can split it evenly and create equitable ad delivery. You’ll find that most platforms have options for testing, including Google AdWords, Analytics Experiments, Optimizely and Unbounce.
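If you ever need to split traffic yourself rather than rely on a platform’s built-in tool, one common approach is to hash a visitor ID so that each person lands in the same group every time. The Python sketch below is only an illustration: the function name, the test name and the visitor IDs are hypothetical, and it assumes you have a stable ID for each visitor.

```python
import hashlib

def assign_variant(visitor_id: str, test_name: str = "button-label-test") -> str:
    """Return "control" or "variation"; the same visitor always gets the same answer."""
    # Mixing the test name into the hash keeps splits independent across tests.
    digest = hashlib.sha256(f"{test_name}:{visitor_id}".encode("utf-8")).hexdigest()
    # Even hash values go to control, odd ones to variation: roughly a 50/50 split.
    return "control" if int(digest, 16) % 2 == 0 else "variation"

# Quick check that the split is close to even for made-up visitor IDs.
counts = {"control": 0, "variation": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1
print(counts)
```

Because the assignment depends only on the ID, both groups are drawn from the same audience at the same times of day and days of the week, which is what a fair split requires.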

Considerations for Length & Time of Testing

In order to measure success, you need to give your tests enough time to run. We typically recommend running tests for a minimum of 2 weeks, with most tests running for 1 month.

Volume

How much data is required for you to have statistically significant results? If your program sees low volumes (clicks, impressions, conversions), you need to run longer tests. If you have a high-volume account, you can call tests much faster.
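One way to answer that question is a two-proportion z-test, a standard statistical check that is not specific to any ad platform. The sketch below assumes you only have conversions and clicks for each variant; all of the numbers are made up for illustration.

```python
import math

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both variants perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0, 1.0  # no conversions (or all conversions): nothing to compare
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Same conversion rates (2.0% vs. 2.6%), very different volumes.
z_high, p_high = two_proportion_z_test(200, 10_000, 260, 10_000)
z_low, p_low = two_proportion_z_test(20, 1_000, 26, 1_000)
print(f"high volume: p = {p_high:.4f}")
print(f"low volume:  p = {p_low:.4f}")
```

A p-value below 0.05 is a common (but arbitrary) threshold for calling a winner. In this made-up example the high-volume test clears it while the low-volume test, with identical rates, does not, which is why low-volume programs need to run longer.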

Seasonality

If you have drastic peaks and lows due to seasonality, I suggest finding the middle ground for testing. I never recommend testing during a seasonal low unless you’re dealing with a high-volume account.

Day of Week/Month Activity

Make sure that both groups have equal coverage during your peak days and weeks. Again, try to avoid running tests during your low periods, especially if you see significant swings in CTR and conversion rates.

It’s also essential to make sure that your test is going to have an impact. Slight copy variations and image changes often aren’t going to tell you much. You want to make sure the variant has a very clear goal and hypothesis. For example, by changing a button from “Download” to “Get My Guide”, I’m hoping to see a 10% lift in conversions.
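A hypothesis like that also tells you roughly how much traffic the test needs. As a rough sketch, the standard sample-size formula for comparing two conversion rates can be written in Python. The 5% baseline conversion rate, the 95% confidence level, the 80% power and the daily visitor count used here are assumptions for illustration, not figures from our tests.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(baseline_rate: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed in EACH group to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)  # e.g. 5% with a 10% lift -> 5.5%
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided confidence level
    z_beta = NormalDist().inv_cdf(power)           # chance of catching a real lift
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)

def days_to_run(n_per_variant: int, daily_visitors: int) -> int:
    """Days needed when daily traffic is split evenly across two variants."""
    return math.ceil(2 * n_per_variant / daily_visitors)

# Hypothetical inputs: 5% baseline conversion rate, hoping for a 10% lift.
needed = sample_size_per_variant(baseline_rate=0.05, relative_lift=0.10)
print(needed, "visitors per variant")
print(days_to_run(needed, daily_visitors=3_000), "days at 3,000 visitors per day")
```

Under these assumptions, a 10% lift on a 5% baseline needs on the order of 30,000 visitors per variant. Smaller expected lifts need far more traffic, which is one more reason to test changes you expect to matter.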

What Types of Digital Advertising A/B Tests Should You Try?

When we implement digital advertising tests, we tie them directly to program goals. Depending on the goals for your digital advertising program, we recommend launching the following types of tests:

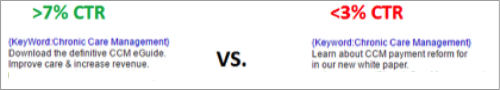

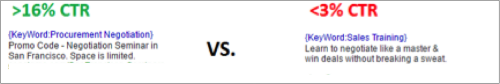

Message Testing:

Test your messaging with simple ad variations such as:

- Length of Message

- Promotional-Based Messaging vs. Informational-Based Messaging vs. Benefit-Based Messaging

- Various Ad Extensions

Changing a single word within your message normally doesn’t lead to strong insights, so make sure the change is substantial.

Below you’ll find a couple of examples where we have tested different messaging tactics for significant engagement improvements.

Promotional-Based Text Ads Driving Significantly Higher CTRs Than Benefit Messaging:

Benefit-Based Text Ad Driving Significantly Higher CTRs Than Informational Messaging:

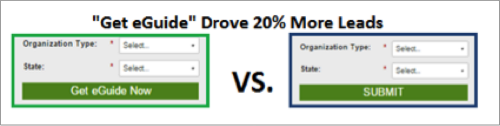

Landing Page Element Testing

Testing different elements on your landing pages can lead to surprising results. Whether it’s the layout, form fields or buttons, it’s always important to experiment.

Below you’ll see a simple button label change driving a major improvement in lead volume!

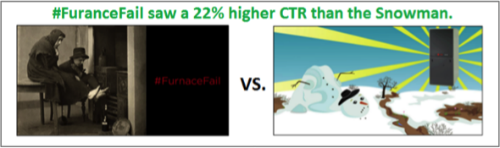

Imagery Testing

For social posts, display ads and landing pages, it is always good to test a couple of contrasting images, themes and concepts.

Especially if you have multiple audience personas, you’ll want to know what type of visuals and graphics resonate best with each audience. Do you need to be more straightforward? Does humor resonate with your audience? Does your audience like to see the product? Are certain concepts better for social than display? You’ll discover these answers via testing.

Here’s an example of a test we ran for a social campaign. #FuranceFail had a humorous twist that resonated much better with our social audience.

Make Informed Testing Decisions

Again, make sure your testing has a purpose, a hypothesis and a goal. If you’ve put serious thought into your A/B tests, you won’t be disappointed. Even if the outcome isn’t the one you wanted, now you know, and knowledge is power.